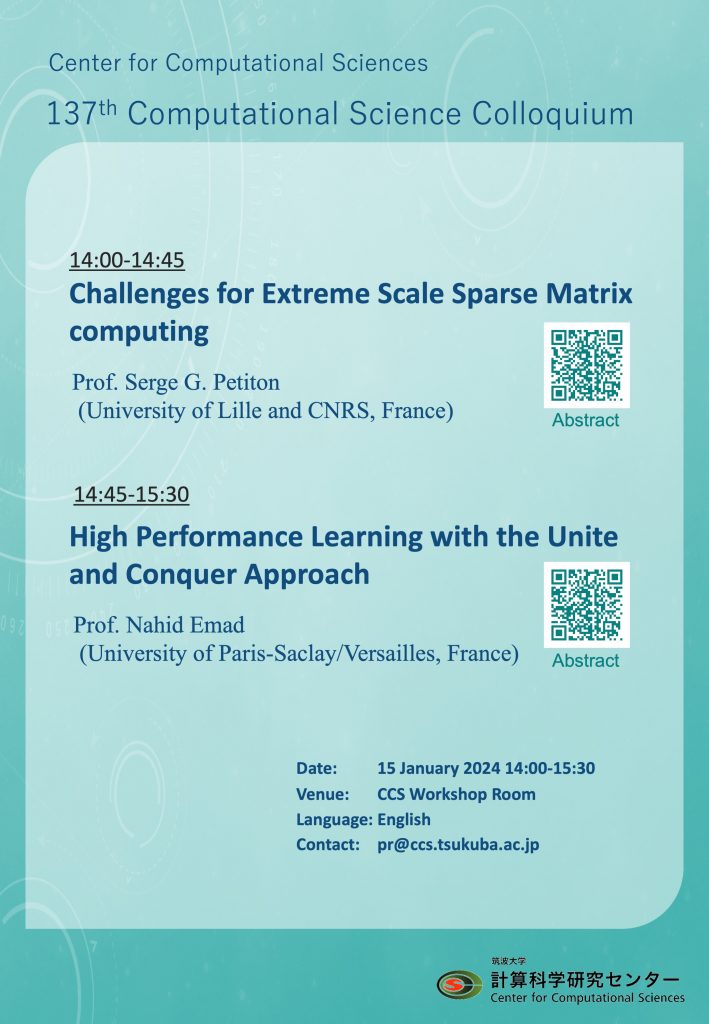

The 137th Computational Science Colloquium will be held as detailed below. We look forward to your attendance.

Overview: The 137th Computational Science Colloquium

Date and time: Monday, January 15, 2024, 14:00-15:30

Venue: Workshop Room, Center for Computational Sciences

Language: English

| Time | Talk | Speaker |
| --- | --- | --- |
| 14:00-14:45 | Challenges for Extreme Scale Sparse Matrix computing | Prof. Serge G. Petiton (University of Lille and CNRS, France) |
| 14:45-15:30 | High Performance Learning with the Unite and Conquer Approach | Prof. Nahid Emad (University of Paris-Saclay/Versailles, France) |

Abstract (Prof. Serge G. Petiton): Exascale machines are now available, based on several different arithmetics (from 64-bit down to 32- and 16-bit, including mixed versions and some that are no longer IEEE compliant) and using different architectures (with network-on-chip processors and/or accelerators). Brain-scale applications, for example from machine learning and AI, manipulate huge graphs that lead to very sparse non-symmetric linear algebra problems. Moreover, those supercomputers were designed primarily for computational science, mainly numerical simulations, not for machine learning and AI. New applications that are maturing after the convergence of big data and HPC toward machine learning and AI will probably give rise to post-exascale computing that redefines some programming and application development paradigms. End users and scientists face many challenges associated with these evolutions and with the increasing size of the data.

In this talk, after a short description of some recent evolutions that have an important impact on our results, in particular concerning programming paradigms, I present some results obtained on Fugaku, still the #1 supercomputer on the HPCG list, for sequences of sparse matrix products, with respect to the sparsity and size of the matrices on the one hand, and the number of processes and nodes on the other. Then I introduce two open-source generators of very large data, which make it possible to evaluate several methods using very large graph-sparse matrices as data sets for several application evaluations.

Abstract (Prof. Nahid Emad): The ever-increasing production of data requires new methodological and technological approaches to meet the challenge of analyzing it effectively. We highlight the omnipresence in machine learning techniques of certain linear algebra methods, such as the eigenvalue problem or, more generally, the singular value decomposition. A new machine learning approach based on the Unite and Conquer methods used in linear algebra will be presented. The important characteristics of this intrinsically parallel and scalable technique make it very well suited to multi-level and heterogeneous parallel and/or distributed architectures. We also highlight the very strong interactions between machine and deep learning methods and sparse linear algebra, and present a promising approach in this area. Experimental results demonstrating the value of these approaches for efficient data analysis in clustering, cybersecurity, and road traffic simulation will be presented.

Organizer: Taisuke Boku (朴泰祐)