Chief

KAMEDA Yoshinari, Professor

Yoshinari Kameda received his Bachelor of Engineering, Master of Engineering, and Doctor of Philosophy degrees from Kyoto University in 1991, 1993, and 1999, respectively. He was an assistant professor at Kyoto University until 2003, and a visiting scholar at the AI Laboratory at MIT in 2001-2002. In 2003, he joined the University of Tsukuba, where he is now a full professor. Dr. Kameda's research interests include intelligent enhancement of human vision, mixed reality, video media processing, computer vision, intelligent support for handicapped people, and a smart transportation society.

Overview

Our group aims to establish a new paradigm of computational media that can be used both in scientific research and in our everyday surroundings. In human-computer interaction, processing speed and data flow rate should be matched to human capabilities. This means that any computational medium should take into account human response time and people's ability to understand the information the computer provides. Our research covers computational science research settings, social systems, daily life situations, and city-scale environments. Our ultimate goal is to build new, human-friendly, and intelligent media on the basis of computational sciences.

Research topics

- Expansion of research areas to human society, living spaces, and city environments

- Intelligent and human-friendly feedback methods that present real observation data and simulation results in a unified form

- Computer vision and image processing technologies for computational medical science

- Advanced applications of computational media to sports performance analysis and skill development

We have been working on visual activity analysis in sports scenes. One example is the analysis of human visual behavior when deciding on a pass course under pressure in a soccer game situation. The figure shows one of our prototype systems, in which a subject views a virtual soccer scene while their motions are being measured.

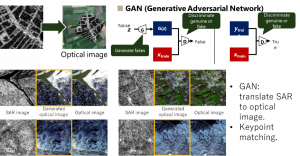

One of our city-scale research projects is image alignment between multi-modal images using advanced computer vision techniques. Since the sensors and their images have different intrinsic properties, a specialized, intelligent approach is needed to achieve precise alignment. Our advanced computer vision technologies make this possible.

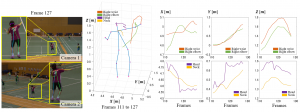

As for sports applications, one of our research projects focuses on highly precise 3D human pose estimation in badminton games. We have been conducting joint research with the Japanese national badminton team.

Web sites

Computer Vision and Image Media Laboratory

(Update: 2019 Dec. 18)